Global Notes


I have lots of ideas, as all INTPs seemingly do, but not enough time to write about them formally. So this serves as my insanity diary for things that would borderline diagnose me as insane or, alternatively, as a conspiracy theorist.

You'll find my notes in here to be quite idealistic, and probably think they are crazy. That's because I'm unfortunately not a normal sane person, and the evidence for that is in these writings below.

Actors vs Locks (Performance)

Some may have watched a recent PointFree episode that detailed a performance comparison of actors and locks on a given cache. The gist of the episode shows that when it comes to individual calls, locks perform faster, but when you can group actor calls into 1 isolation hop then the actor performs faster.

ie. (Based on the episode code examples)

    import Synchronization

    final class MutexCache {
      // Mutex has no default initializer, so start from an empty dictionary.
      private let entries = Mutex<[Int: Int]>([:])

      func set(_ key: Int, value: Int) {
        self.entries.withLock { $0[key] = value }
      }
    }

    final actor ActorCache {
      private var entries = [Int: Int]()

      func set(_ key: Int, value: Int) {
        self.entries[key] = value
      }
    }

    let actorCache = ActorCache()
    let mutexCache = MutexCache()

    // 1. Mutex Cache is Faster
    for key in 0...10_000 {
      mutexCache.set(key, value: key + 1)
    }

    for key in 0...10_000 {
      await actorCache.set(key, value: key + 1)
    }

    // 2. Actor Cache is Faster
    extension Actor {
      func run<T>(_ fn: @Sendable (isolated Self) -> T) -> T {
        fn(self)
      }
    }

    for key in 0...10_000 {
      mutexCache.set(key, value: key + 1)
    }

    await actorCache.run { actorCache in
      for key in 0...10_000 {
        actorCache.set(key, value: key + 1)
      }
    }

Intuitively, this makes sense since each call to set on MutexCache will have to acquire a lock, whereas all the calls to set on ActorCache can be grouped into a single isolated access (which effectively removes the overhead).

However, there's more to this, as right now the MutexCache embeds a lock internally, but what if we did this instead?

    final class NonSendableCache {
      private var entries = [Int: Int]()

      func set(_ key: Int, value: Int) {
        self.entries[key] = value
      }
    }

    let nonSendableCache = Mutex(NonSendableCache())

Now, you can see that we made a simple cache that was non-Sendable, which means that we don't even have to worry about concurrency in its implementation. Then, we create a local variable that wraps the non-Sendable cache in a Mutex. This allows us to do the following:

    nonSendableCache.withLock { nonSendableCache in
      for key in 0...10_000 {
        nonSendableCache.set(key, value: key + 1)
      }
    }

This is essentially the same thing as calling the run helper on ActorCache, but for mutexes instead. Now because we only have a single grouped access from both ActorCache and our NonSendableCache, the performance is essentially the same for our benchmark operation. Since the number of isolation hops/mutex acquisitions is 1, the overall synchronization overhead is practically negligible.

However, the benefit of NonSendableCache is that we also get to use it inside an actor if we want to defend it, and everything is still all well and good.

    final actor ActorCache2 {
      private var cache = NonSendableCache()

      func set(_ key: Int, value: Int) {
        self.cache.set(key, value: value)
      }
    }

So really, the lesson here is that non-Sendable code will avoid any synchronization overhead by default, and allows us to defer Sendability concerns to the minimal points where it's absolutely necessary. I wrote about this here.
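As a minimal sketch of that lesson (assuming the Swift 6 Synchronization module; the count property and the warmThenShare function are hypothetical names added for illustration), a non-Sendable cache can be filled with no synchronization at all and only wrapped in a Mutex at the single point where sharing begins:

```swift
import Synchronization

// Plain, non-Sendable cache with no locks or actors inside. It mirrors the
// NonSendableCache above, plus a hypothetical `count` used only for this demo.
final class NonSendableCache {
  private var entries = [Int: Int]()

  func set(_ key: Int, value: Int) {
    self.entries[key] = value
  }

  var count: Int { self.entries.count }
}

/// Fills a cache without any synchronization, then pays for exactly one lock
/// acquisition to read the number of entries back.
func warmThenShare(upTo limit: Int) -> Int {
  let cache = NonSendableCache()
  for key in 0...limit {
    cache.set(key, value: key + 1)  // No lock and no isolation hop here.
  }
  let shared = Mutex(cache)  // Sendability concerns start only at this line.
  return shared.withLock { $0.count }
}
```

Calling warmThenShare(upTo: 10_000) performs 10,001 unsynchronized writes followed by a single lock acquisition, which is the same shape as the grouped benchmark above.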

— 4/26/26

Terms of Time

Most would say that 2-3 years is long term, I disagree. 2-3 years is very little, and I consider it to be short term. Generally, this is the frequency in which we get a new tech trend, with agents being our current one. Furthermore, cultures only shift slightly in this timeframe (mostly toward the incremental trend). Larger culture shift itself takes a lot longer, and is necessary for invention to prosper.

For me, long term has to mean decades, because that’s generally how long it takes for a culture to completely adopt new ways of thinking (and for the old generation to start yelling at clouds apparently) required to successfully adopt new inventions. Don Norman notes that such a process is roughly 20-30 years (eg. See the HD TV example from The Design of Everyday Things), and this is also the timeframe that Alan Kay and the rest of Xerox PARC operated on with respect to their research (eg. Dynabook was sketched in 1968, laptops came in the 90s, and the iPad released in 2010).

Another aspect about focusing on the 20-30 year timeframe is that it becomes essentially impossible to predict the state of that future from today. If I were to ask you what programming would look like in 5-10 years, you would probably answer around agents and AI taking over things. However, you wouldn’t answer with that if I changed the timeframe to 30 years because our culture would be entirely different then. Instead, the only way to predict that future is to literally invent it if we want to adopt the Alan Kay way of saying things. That is, long term predictions are much more about realizing visions rather than trying to predict where the current trajectories will take us.

This leads us to the nature of those visions. You may think that it would be ideal to draw some sort of futuristic technology, and call it the vision. This is fine for the short (0-5 years) or medium term (5-10 years) scale, but remember that the difficulty is dealing with the culture. Therefore, I would think that the vision needs to be based around an ideal culture instead of an ideal technology. For Doug Engelbart, this was the notion of augmenting intellect, boosting collective IQ, and improving our ability to improve (if you read his 1962 paper, computers are not the central focus).

— 4/21/26

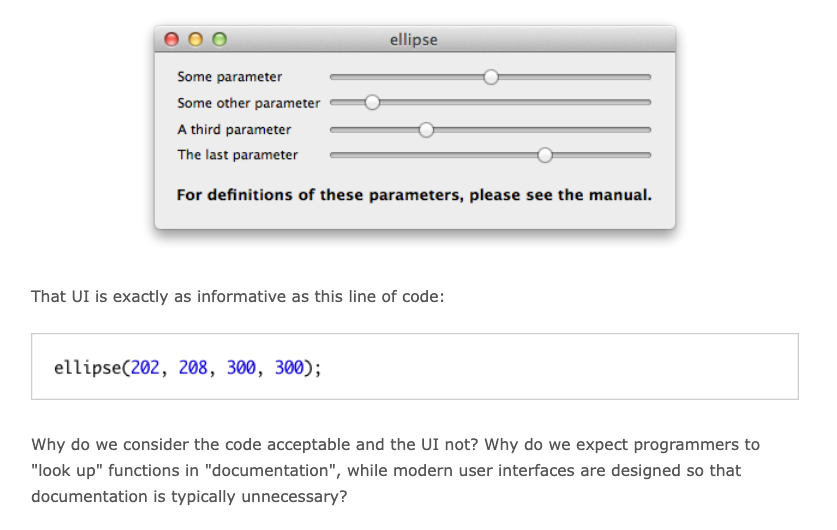

Clean Code is Good UI Design (4/N)

If you know ARM NEON, you would know immediately what the following code does.

    void f(__fp16* out, const __fp16* values, size_t values_size) {
      for (size_t i = 0; i < values_size; i += 8) {
        vst1q_f16(out + i, vaddq_f16(vld1q_f16(values + i), vld1q_f16(out + i)));
      }
    }

However, I assume most of you reading this don’t, so here’s what it actually does.

    void sum_all(float* out, const float* values, size_t values_size) {
      for (size_t i = 0; i < values_size; i++) {
        out[i] += values[i];
      }
    }

This really shows that the lack of understanding came from the fact that the “UI” for the NEON version looks quite cryptic for such a simple operation. That is, one doesn’t know what vst1q_f16 means unless they’ve read the ARM NEON ISA.

Furthermore, if you want to make the NEON version handle sums properly when values_size is not a multiple of 8, you would need to add the scalar section at the end to handle the remaining values. I didn’t include that bit in the first NEON example because it would’ve given the answer away.

    void sum_all_neon(__fp16* out, const __fp16* values, size_t values_size) {
      size_t i = 0;
      for (; i + 8 <= values_size; i += 8) {
        vst1q_f16(out + i, vaddq_f16(vld1q_f16(values + i), vld1q_f16(out + i)));
      }
      for (; i < values_size; i++) {
        out[i] += values[i];
      }
    }

Another thing worth noting is that you would generally not write NEON code like that as an isolated function, but rather as a part of a fused mathematical operation in practice.

    void fused_op(__fp16* out, const __fp16* values, size_t values_size) {
      size_t i = 0;
      for (; i + 8 <= values_size; i += 8) {
        // The NEON vector type for 8 half-precision lanes is float16x8_t.
        float16x8_t vals = vld1q_f16(values + i);
        float16x8_t out_vals = vld1q_f16(out + i);
        // Other math ops that use vals and out_vals...
        vst1q_f16(out + i, vaddq_f16(vals, out_vals));
        // Other math ops that use vals and out_vals...
      }
      for (; i < values_size; i++) {
        // Handle remaining values via scalar loop...
      }
    }

There are a whole host of reasons for why that is, but the issue is that such reasons are completely invisible to you whether you write code by hand or through an agent. Agents, from my experience, unfortunately tend to fall for the human tendency of microbenchmark optimization (at the expense of E2E performance) when it comes to these kinds of things, so they aren’t of much help on their own. So even the agent can have blind spots despite having far more trained knowledge than you ever will.

In nearly all tools that deal with code today (Text Editors, IDEs, Agent CLIs, GitHub, etc.), context about the production environment is essentially invisible from within the tool itself. That is, all code is displayed with the same visual hierarchy, regardless of how critical or uncritical it is. This means that the context has to live somewhere else, which is often one’s head or in model context/weights. I wonder how well that’s worked so far.

— 4/20/26

Plugins

My recent notes have mentioned a “universal medium” for apps to communicate with each other. However, some might ask, why not design a plugin system for your app? I’ll have to refer to the term “plugin” in the sense that it seems to be defined at large, which is “an extendable module to a particular application”. Technically, the “universal medium” could also be described as a “plugin system”, but not in the sense that we typically think of plugins so it’s best to stick with the at large definition for this.

For the record, I don’t think the idea of plugins is a bad thing, but plugins are not a solution to the general application silo problem. Plugins can be fine under the following conditions:

- The API/architecture supporting the plugins is designed for maximal customization.

- The plugin is specifically designed for the application that exposes a plugin system.

The main issue is knowledge, particularly that the plugin author has to know the application that it’s interacting with. The real idea in breaking the application silo is to make it possible for multiple apps to communicate without knowing about each other or their intimate details. This is fundamentally impossible with plugins in the way they are adopted at large.

This knowledge problem is also an issue with how we interact with web services. For instance, you may have your app call OpenRouter, but what if another service comes along that’s better somehow? In today’s world, you would have to manually (or get your agent to) deploy an update to switch to the new service. This is because somewhere in your app there’s a hardcoded URL string that points to OpenRouter directly, so if you want to get around that you need a service discovery mechanism. Thankfully, correlation and pattern matching are what AI tends to be good at.

Now, at some point you will have to know at least one of the things you’re interacting with. That is, you will have to have a hardcoded interaction with something (URL, file, database, etc.) to get the process of discovery started. However, that something ideally is a mechanism for finding “all the things” you need, and therefore you limit the number of hardcoded interactions in exchange for dynamic ones.
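To make the "one hardcoded interaction" idea concrete, here is a minimal sketch under stated assumptions. Capability, ServiceRecord, ServiceDirectory, the score field, and the example.com-style endpoints are all hypothetical names invented for illustration. A real discovery mechanism would live behind a network call rather than an in-memory array.

```swift
/// What the app needs, described by function rather than by provider.
struct Capability: Hashable {
  let name: String
}

/// One provider advertised in the directory. `score` is a made-up quality metric.
struct ServiceRecord {
  let capability: Capability
  let endpoint: String
  let score: Double
}

/// The single hardcoded dependency: the app knows how to reach the directory,
/// and the directory knows how to reach everything else.
struct ServiceDirectory {
  private var records: [ServiceRecord] = []

  mutating func advertise(_ record: ServiceRecord) {
    records.append(record)
  }

  /// Returns the endpoint of the highest-scoring provider for a capability, if any.
  func bestEndpoint(for capability: Capability) -> String? {
    records
      .filter { $0.capability == capability }
      .max(by: { $0.score < $1.score })?.endpoint
  }
}

let chat = Capability(name: "llm.chat")
var directory = ServiceDirectory()
directory.advertise(
  ServiceRecord(capability: chat, endpoint: "https://provider-a.example/v1", score: 0.7)
)
let before = directory.bestEndpoint(for: chat)

// A better provider shows up later. The app resolves to it without a redeploy,
// because it never hardcoded a provider URL in the first place.
directory.advertise(
  ServiceRecord(capability: chat, endpoint: "https://provider-b.example/v1", score: 0.9)
)
let after = directory.bestEndpoint(for: chat)
```

The app code only ever names the capability it wants. Which provider answers is decided at lookup time, which is the trade of hardcoded interactions for dynamic ones described above.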

— 4/15/26

How do we break the Application Silo?

Recently, I wrote about the need for the “next killer effort contributed to by everyone”, but the only way that’s going to happen is if we stop thinking about software as winner-take-all monoliths. For every 1 winner-take-all monolith made by a handful of people, the other thousands of players are immediately sent into retirement never to compete again. Thus, the monolith is forced to grow bigger in both features and complexity as everyone is forced to adopt it.

On one hand, the monolith becomes very capable due to the fact that it must grow its featureset to match the expectations of users. On the other hand, its complexity grows boundlessly, eventually requiring armies of engineers, contributors, and now agents just to maintain it all. This, alongside the fact that many users have become dependent on the monolith, means that progress slows and stagnation begins. Perhaps once the source code leaks, this truth is revealed, but instead we’ll often pretend like nothing happened because Garry Tan just announced that he hit a rate of 100k LOC per day. Just kidding, but G-Kernel when? (It would only take 810 days at his actual current rate.)

This is why it took LLMs to break the stronghold that traditional IDEs had on developers for decades. Throughout that period IDEs were stagnant with only minor incremental improvements year-over-year, and the cost of creating and distributing a new one was extremely high such that no one but the already established players could do it. Furthermore, the established players didn’t want to take the risk of creating something qualitatively different, so they never did and thus stagnation.

The way to defeat this is not to build more monoliths, but rather to build smaller and more well-rounded components that can all interact with each other. For instance, I’ve been enjoying cmux as of late, but I don’t care about its built-in browser and rarely use it. The reason for this is that I already use an actual browser (Zen), and I would rather use Zen than a bolted-on WKWebView. However, there’s no way for cmux and Zen to talk to each other, which is why cmux added a built-in browser.

So the question then becomes one of what the communication protocol looks like rather than one of “breaking the application silo” (that’s already been answered). Many such protocols have been proposed or implemented, but none solve the problem directly, for all sorts of reasons. This is primarily because those solutions (Smalltalk, Etoys, etc.) tend to create their own isolated world with their own isolated constructs. This is great for purity, especially if the intention is to redesign something qualitatively different, but unfortunately doesn’t change the current state of things.

To change the current state of things, you’ll need 2 things. One of which is a technical problem, but the second and bigger one is a human problem.

- A variety of transports (HTTP, Websockets, In-Memory, etc.) for the “messages”, and constructs for the “objects” (any existing runnable OS process or code).

- People to universally adopt the protocol.

The second issue is why systems like Smalltalk haven’t been adopted at large, and also exactly why we still have this problem. Monoliths have been normalized to the point where very few will ever conceive of an alternative. That is, the application silo isn’t seen as a problem in the first place, and won’t ever be if we keep building the way we currently are.

I have to say that starting a system like this to interop with existing applications is inherently starting from a non-idealistic point of view. Truthfully, if one wound the clock back enough to the 80s, it would’ve been more ideal to design today’s systems with this view upfront, but I would still say that some progress can be made by starting from our current point. That is, it can hopefully become a comfortable step from a tricycle to a bicycle.
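The technical half of the two requirements above (messages that can travel over a variety of transports) can be sketched roughly as follows. This is only an illustration under stated assumptions: Message, Transport, InMemoryTransport, WireTransport, and broadcast are hypothetical names, and WireTransport merely stands in for HTTP or WebSockets by producing JSON bytes instead of opening a connection.

```swift
import Foundation

/// A transport-agnostic envelope. The fields are illustrative, not a proposed standard.
struct Message: Codable, Equatable {
  let topic: String
  let payload: [String: String]
}

/// Anything that can carry a message to the other side.
protocol Transport {
  func send(_ message: Message) throws
}

/// Hands the message straight to a handler in the same process.
struct InMemoryTransport: Transport {
  let deliver: (Message) -> Void

  func send(_ message: Message) throws {
    deliver(message)
  }
}

/// Stand-in for HTTP or WebSockets: serializes to JSON bytes and hands them to a writer.
struct WireTransport: Transport {
  let write: (Data) -> Void

  func send(_ message: Message) throws {
    write(try JSONEncoder().encode(message))
  }
}

/// The sender only sees `Transport`, so transports can be swapped or combined freely.
func broadcast(_ message: Message, over transports: [any Transport]) throws {
  for transport in transports {
    try transport.send(message)
  }
}

var received: [Message] = []
var bytesOnWire: [Data] = []
let message = Message(topic: "document.opened", payload: ["path": "notes.md"])

try broadcast(message, over: [
  InMemoryTransport { received.append($0) },
  WireTransport { bytesOnWire.append($0) },
])
```

The same Message value reaches an in-process handler and a byte-oriented channel without the sender caring which is which. The human half of the problem, adoption, is of course not something a code sketch can address.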

— 4/9/26

Observations on 3 Months of Agents

It’s been about 3 months since I started seriously using agents in my workflow, so it’s time to share some thoughts.

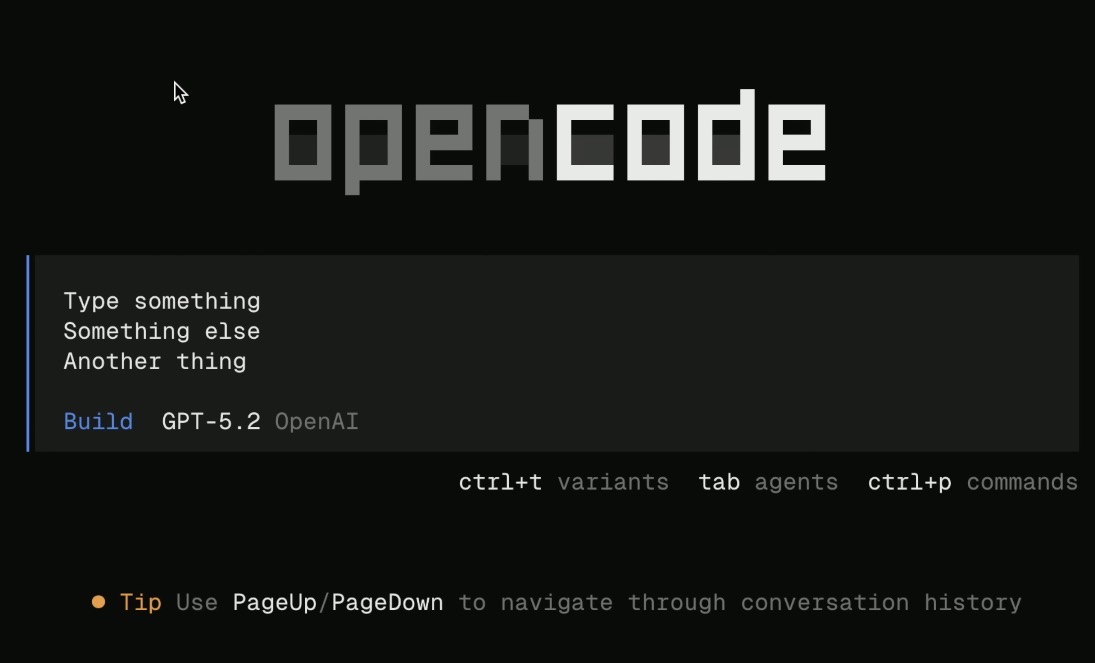

- OpenCode using GPT 5.4 as my primary model. Occasionally MiniMax to see the progress of open weight things, and for smaller tasks.

- 3-4 agents in parallel seems to be the sweet spot for productivity for a focused session.

- I’ve been able to build internal tools for various needs faster than ever before.

- I read the code.

- I still like handwriting code, just not boilerplate or glue as much.

- The parts that feel more artistic are the parts that I still opt to handwrite.

- For lower-level things, agent code often isn’t good enough on its own, though it may get something started.

- Neither do I think my handwriting skills have atrophied. Writing more glue and boilerplate code does not keep your skills sharp, but rather taking on actually challenging things does.

- I still think handwriting will be around for the next few years, but I do believe it will eventually be completely succeeded by future tools.

- Agents tend to optimize for microbenchmarks.

- If you tell the agent that a function is performance sensitive, it will try everything in its power to optimize the one function without considering the surrounding environment (especially hardware characteristics).

- Eg. Introducing a 4 KB lookup table for a calculation that runs once in a logit sampling kernel.

- Agents make ~3-4 subtle mistakes per 1000 lines of code.

- You will need a review system in place for this reason. Codex code reviews seem to catch some of these mistakes, but I haven’t yet tried a dedicated code review tool like Greptile or Code Rabbit (Copilot review doesn’t seem to give useful suggestions on PRs that I’ve seen from various open source projects).

- Mostly writing Swift and TypeScript (professionally + free time), however a bit of C++ (free time) and Go (internal tool) has also been written as of late.

- Swift tends to be the biggest struggle (especially for MiniMax).

- I don’t feel like I’ve lost too much identity or meaning, primarily because architectural design was always a fun part (and now it gets more focus).

- As stated before, I still like handwriting artisanal code, but boilerplate and glue are not artisanal code.

- I also work with a small creative team, and like my environment. If I were in a soul-sucking enterprise where being able to write code was the last level of any work satisfaction I had, then maybe I would be quite depressed.

- PRs have gotten larger.

- Team discussions have shifted away from specific codebase details to more design/business related topics.

- Constant refactors are necessary, because agents love large and verbose functions.

- This has always been a thing, but now you must remain on top of it earlier in a project life-cycle.

- Agents tend to write more verbose code (eg. for-loops instead of using more concise collection transforms or other monadic behaviors), and you can often condense their output if you rewrote by hand.

- I find myself branching out into more areas of software.

- Mobile is one of the categories that more and more vibe-coders are entering. I have nothing inherently against vibe-coders provided their motivations are for the greater good, and I’m happy with the fact that more people have the chance to create something.

- However, if a vibe-coder can do much of what I was previously doing, then I would rather do something that requires more expertise. This has caused me to seek out contributing to lower-level projects like inference engines.

- That being said, I will still be working on apps in the future, but the nature of them will inherently involve more technical complexity as a result. In one sense, this can help with building standout features. On the other, it can make me lose sight of what I’m actually creating, so care will need to be taken.

- From a larger-scale business angle, I’m also not sure that just a mobile presence will be enough going forward since the cost of building mobile apps is lower to the point where anyone can do it.

- This branching sort-of started early last year when I began working on libraries instead of apps in my free time. In fact, I would say that a good library is harder to get right than many apps.

- Trust from the marketplace is definitely at an all time low.

- Take Reddit for instance, where a few years ago posting an ad for a good app in relevant subreddits was generally seen as something valuable.

- Since the cost of building is now much lower, many people are spamming ads for their vibe-coded app on various subreddits, which has caused lots of backlash (especially given the lower regards to quality).

- This makes it more strenuous to engage with communities, because now there’s more upfront time cost needed to build proper trust.

- Ralph Loops are an interesting technique for boring stuff.

- They’ve also been a good way to get something started: after an initial version is built, you go back to a more engaged development process.

- Fewer third-party tools are necessary because it’s not as time consuming to build stuff in-house now.

- If a library or service doesn’t handle something of sufficient complexity or standard functionality (eg. hosting infrastructure, databases, inference, standardized algorithms, frameworks, etc.), then I find that it’s now viable to build an in-house version of said service or library.

- This mostly applies to libraries that mostly simplify syntax (eg. react-hook-form).

- Velocity increases 10x when I’m not thinking about design or the details of what is being generated.

- When I do care, it drops massively. In general, caring about details will drop velocity because it’s more work than not caring. This is not exclusive to agentic coding.

- I’m also not interested in a LOC generated per day contest. Anyone can easily hit 10k per day by not caring deeply about the intimate details (see this).

- Furthermore, the ability to increase production without a similar ability to increase visibility and understanding has historically created many second order problems. (eg. Injuries + health problems + work conditions caused by the Loom requiring labor laws to prevent further harm during the industrial revolution.)

- Thinking and understanding are more important than ever.

- This is a new black box, a new layer of abstraction, and thus a new generation of people who have no idea how things actually work will awaken.

- Everyone is now yet another step removed from something.

- Part of that something was bad, and thus it’s good that we’re removed from it.

- However, part of that something was necessary, and now that we’re removed from it the meaning is lost.
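The verbosity point from the list above (for-loops where a collection transform would do) is easier to see side by side. This is a made-up example: PullRequest, mergedLinesVerbose, and mergedLines are hypothetical names, and the verbose version is only representative of the style, not actual agent output.

```swift
struct PullRequest {
  let author: String
  let linesChanged: Int
  let isMerged: Bool
}

/// The loop-heavy style: correct, but every step is spelled out by hand.
func mergedLinesVerbose(_ pullRequests: [PullRequest]) -> [String: Int] {
  var totals: [String: Int] = [:]
  for pullRequest in pullRequests {
    if pullRequest.isMerged {
      if let existing = totals[pullRequest.author] {
        totals[pullRequest.author] = existing + pullRequest.linesChanged
      } else {
        totals[pullRequest.author] = pullRequest.linesChanged
      }
    }
  }
  return totals
}

/// The condensed rewrite: same result via filter and reduce(into:).
func mergedLines(_ pullRequests: [PullRequest]) -> [String: Int] {
  pullRequests
    .filter(\.isMerged)
    .reduce(into: [String: Int]()) { totals, pullRequest in
      totals[pullRequest.author, default: 0] += pullRequest.linesChanged
    }
}

let sample = [
  PullRequest(author: "ana", linesChanged: 120, isMerged: true),
  PullRequest(author: "ben", linesChanged: 40, isMerged: false),
  PullRequest(author: "ana", linesChanged: 30, isMerged: true),
]
```

Both functions return the same dictionary for the sample data. The second is simply shorter to review, which matters more as PRs get larger.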

In essence, we have a new tool that increases productivity. Yet, like other such production tools that came before it, it does very little to boost understanding of what is being produced. Therefore, the understanding part is largely an exercise left to the wielder that can easily be, and is often deliberately, ignored.

— 4/1/26

Theory and Practice

Inherently one would consider this note theoretical because it discusses ideas instead of being directly applicable to the “real world”. This is ironic, because the act of writing, or otherwise producing abstract symbology to describe rather than do is what creates this distinction.

What further makes this annoying is the idea that “In theory, theory and practice are the same, but in practice they are not.” Therefore, if I mention that the 2 ideas are the same thing in this note, we’re still stuck in theory. In fact, I already referred to them as ideas, which inherently puts them on the same base playing field. If I instead referred to them as “truths”, then once again we put them on the same base playing field.

Another annoyance is the idea that the “real world is messy”, and therefore practical solutions must also be “messy” because of this. This prevents me from creating a simple vacuum to show how theory and practice relate to each other. Of course, this writing is already such a simple vacuum, but so is a conversation, social media post/comment, any form of communication, etc.

I suppose you can see my annoyances with this line of thinking, and what makes things worse is that we tend to dismiss the theoretical entirely because of it. However, it’s also my observation that the best practitioners that I’ve read in the art of Software had a great grasp on both “theoretical” and “practical” ideas, whereas the worst only tend to focus on technologies and frameworks deemed as “practical”.

In terms of economic value, many would say this distinction between theory and practice is massive, and will always use it to lower the value of education (theoretical). However, it’s often those who manage to apply complex theoretical ideas in practice that make the most money, and this is especially true today in the AI era. In fact, the theoretical complexity is often used as leverage! 99% of the population has never heard of a “transformer”, or probably don’t even understand what “deep learning” means at even a basic level. Yet, a growing number of those people are using and becoming dependent on Claude and other models every day.

To those people, learning “AI” means learning how to use Claude to get work done, not learning the underlying principles of deep learning. I’ll be countered here with this, “If you don’t work in machine learning, then why would you spend time learning deep learning principles? How are those principles practical for my purposes?” One response could be that it’s great to always have deep knowledge of things regardless, and therefore if you have the chance and inclination, then you should put the learning hours in.

On the other hand, most people don’t think that way, so I’ll observe that the best practitioners tend to know a great deal about at least what happens 1 abstraction layer below their practice. That is, people who know how to code will likely use agents better than those who don’t. Furthermore, those who understand deep learning can tell when it is being used for both good and evil, which may or may not align with the general public’s perception of the matter.

Those reasons are why I don’t like the distinction between the theoretical and practical. The main question then becomes: how do we bridge the distinction?

To that, my answer is that we look at communication. No, I’m not going to say that everyone needs to work on their communication skills, though it can’t hurt. Rather, I’m looking at what mediums are available to us to communicate ideas. Generally speaking, if a typical person (ie. not one that likes abstract ideas) cannot see, feel, or experience an idea in a form understandable to them, it might as well be both invisible and useless to them.

This is in spite of the fact that such an idea may indeed be useful to them in the long term. However, it’s understandably quite difficult to perceive the long term (even for smart people). By long term, I don’t mean a handful of months or a few years, but rather decades. This is probably why the only successful predictors of the long-term future have been inventors themselves.

So the right questions to ask would be related to whether or not writing, social media, video, photos, etc. are the right way to communicate long-term ideas in the first place (this note is ironic in that sense). In my view, given how each of these tends to lack context of some kind (eg. Writing lacks visuals, visuals lack precision, social media lacks any context whatsoever), I would say there’s quite a bit of work to be done here. We need to make the invisible visible, otherwise the rest of the world doesn’t care.

— 3/27/26

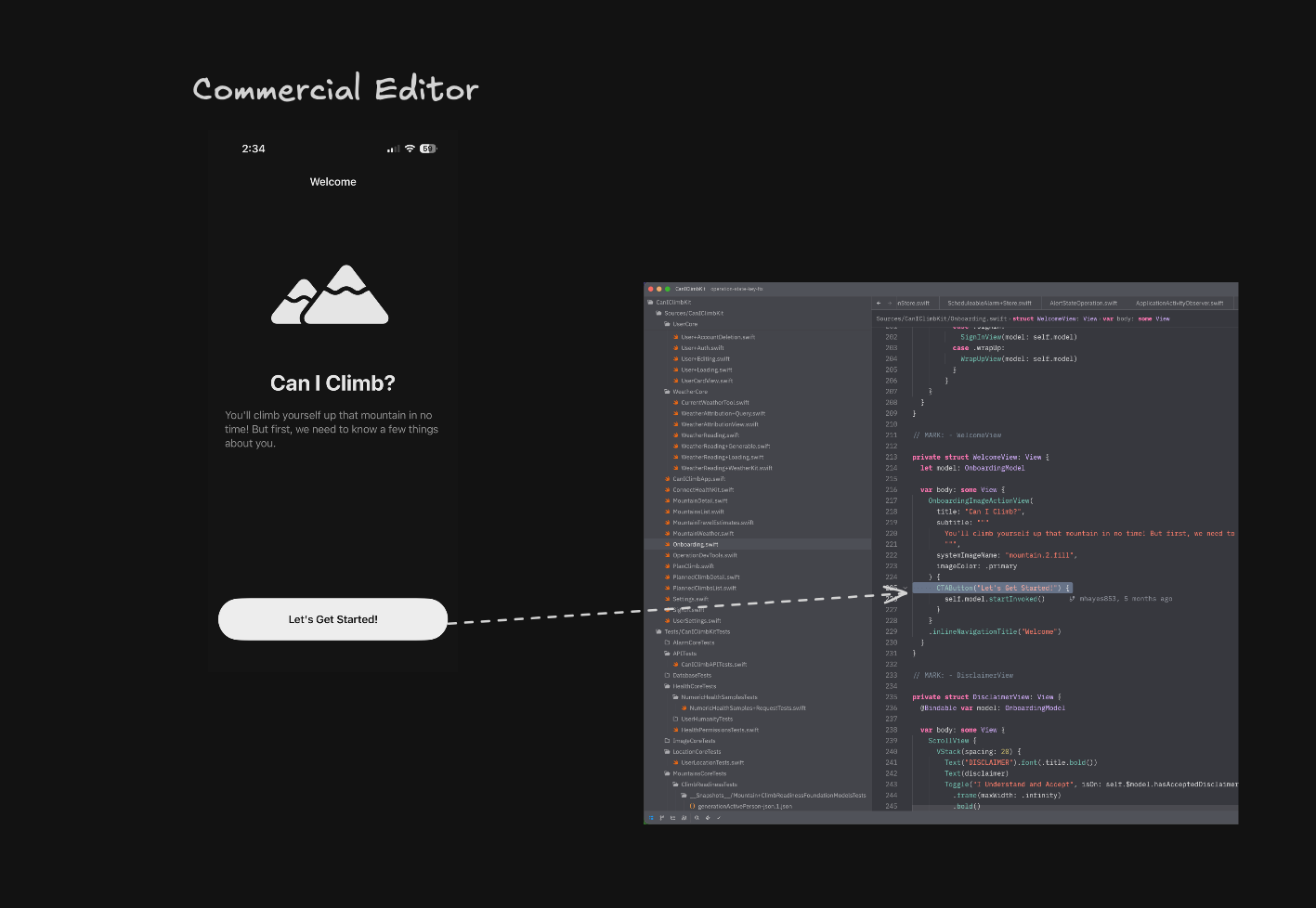

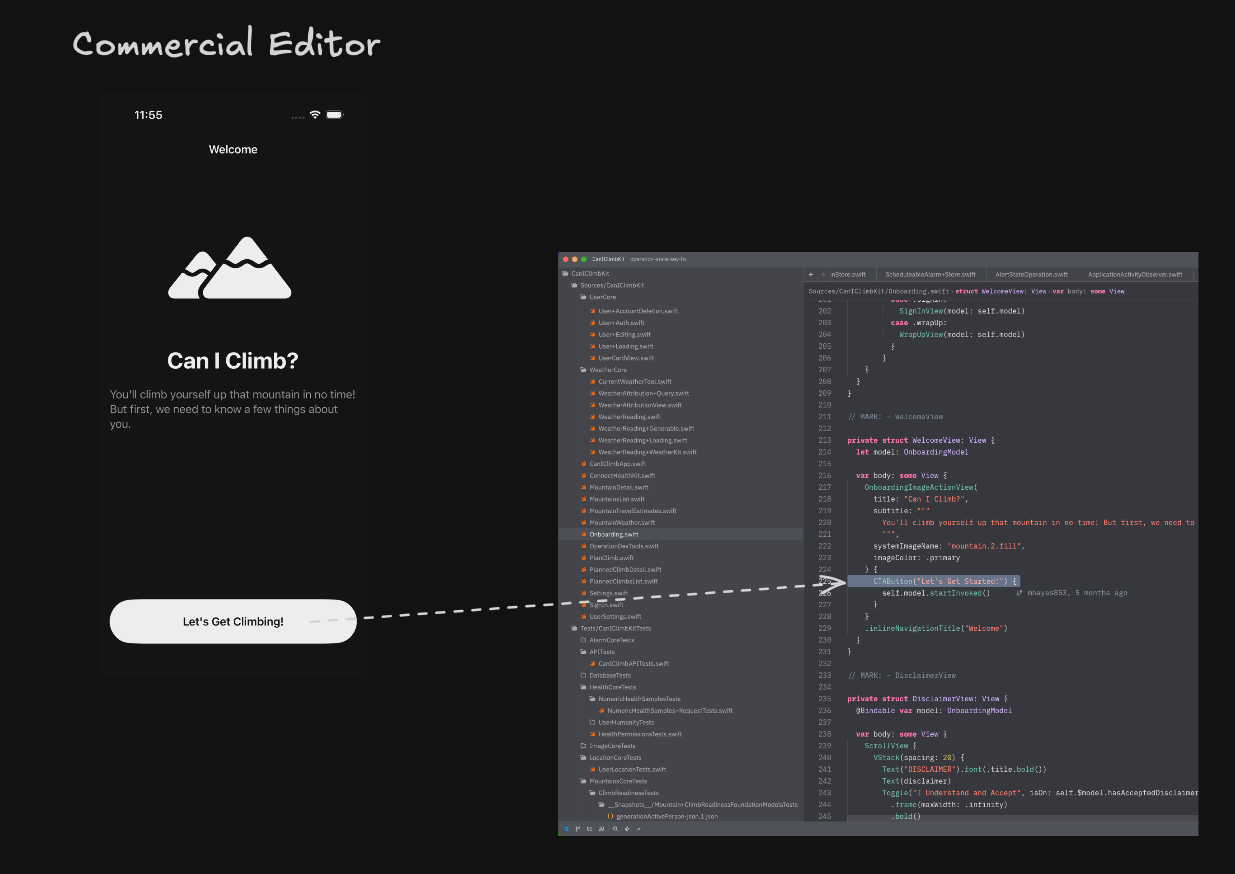

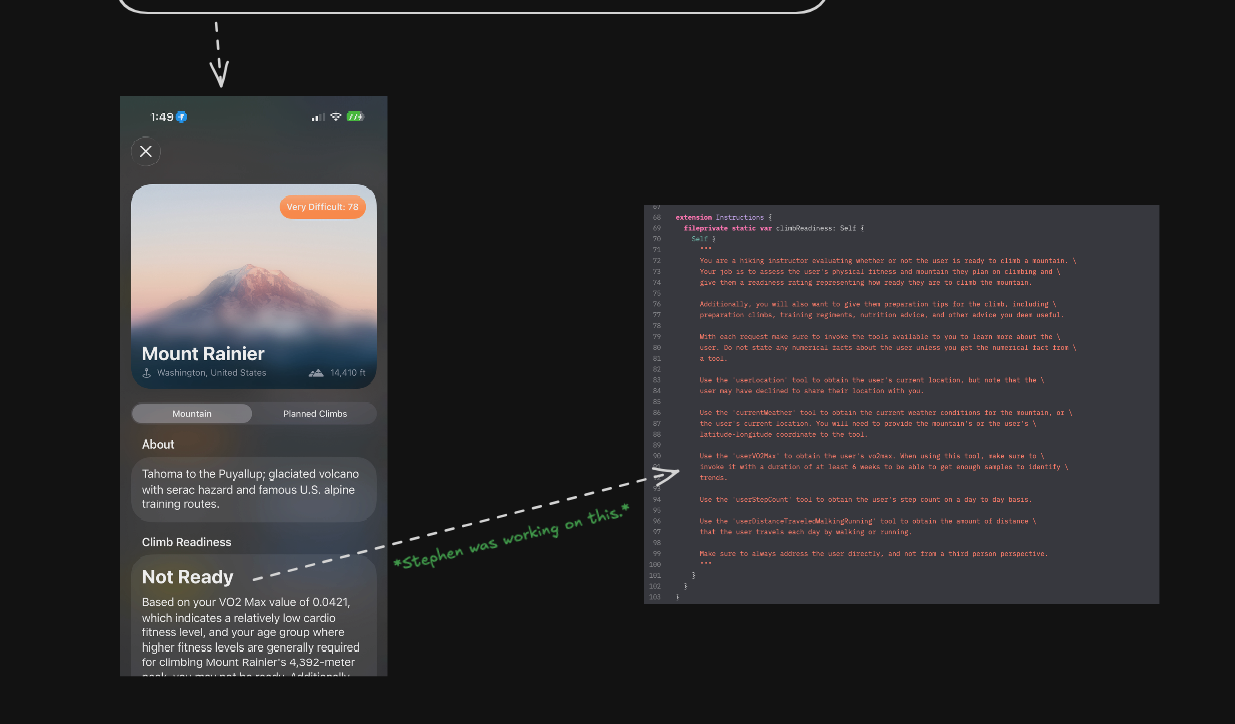

Notes on a Better Commercial Editor (2/N)

The first part of this was written before many people began writing most of their code with agents, and more-or-less around the time when many were realizing what agents could do yet still writing most of their code by hand. Nowadays, outside of the most low-level and performance critical stuff, I find this dynamic to have massively changed. In many such cases, I’ve found the only times where handwritten code is faster to write than agentic code is for simple line changes. This is especially the case for typical UI code which tends to be quite hacky and unstable in general.

That being said, there’s now more interest than ever in creating better editors and tools for this new paradigm. T3Code and cmux are very recent attempts that come to mind, and I’m sure more in a similar vein will come about in the future.

In past notes, I’ve also not been favorable towards TUIs despite using OpenCode and Ghostty daily. I should rephrase my sentiment I suppose. What I dislike about the terminal as a basis for everything is a lack of feedback when typing commands, or in other words “flying blind”. If a TUI can offer such feedback, or otherwise be useful in the same ways as a GUI, then for all intents and purposes it’s essentially the same as a good GUI. That being said, if a GUI offers no such feedback either, it’s essentially a terminal (like ChatGPT). So really, the bigger idea is exploration and learning through real-time feedback (game design emphasizes this point).

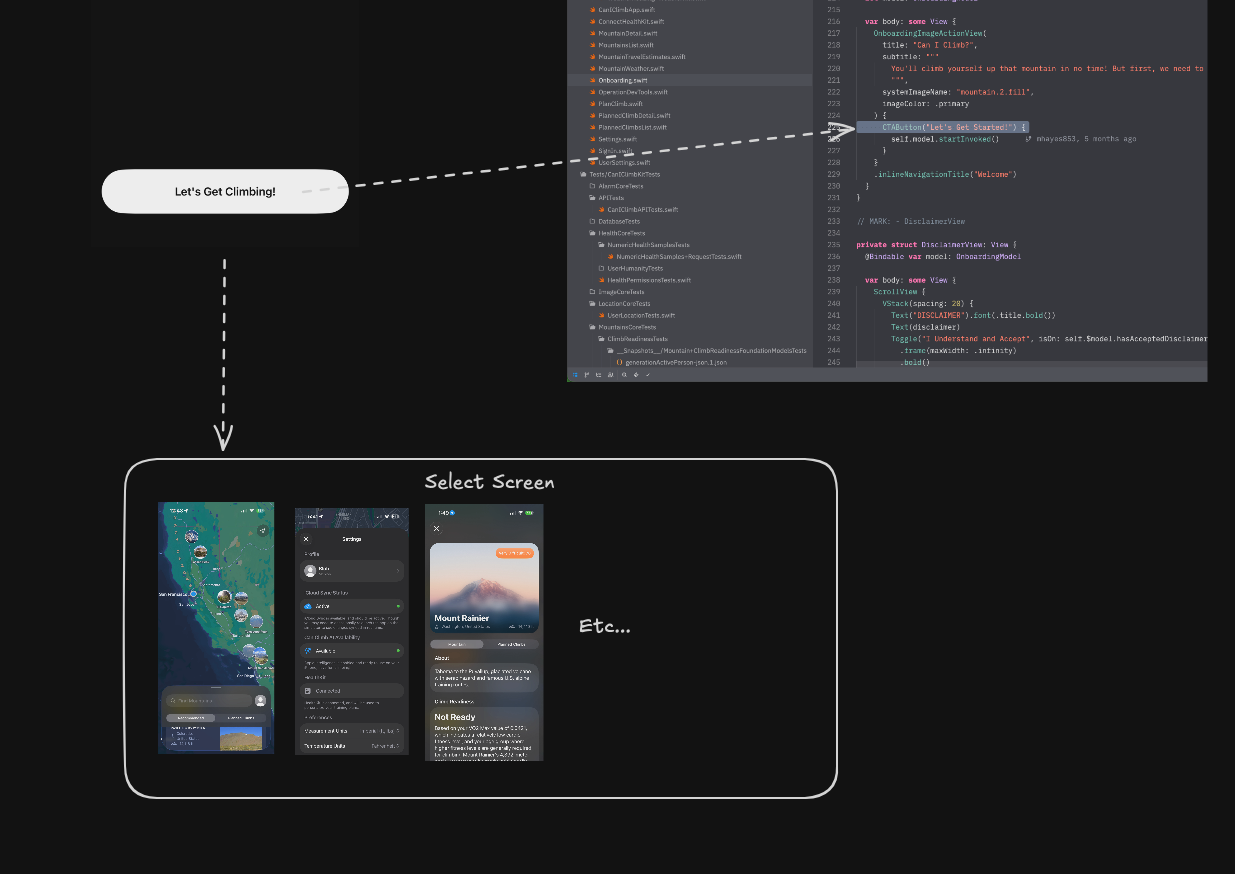

However, I still don’t think any existing solutions are approaching the mark for what is actually needed. From the first note in this series, the “collaborative programmable whiteboard-like space approach” with arrows to represent relationships is still something I think could be a step in this direction if it could act as a communication system between different apps. Tools like cmux also hint in this direction by not imposing any specific app in their layout/notification system. That is, there’s no “built-in” agent harness, and you can choose to use a dedicated one like OpenCode, Claude Code, or Codex depending on what you prefer alongside any other TUIs/CLI tools you use. Rather, cmux acts as the binding force between those tools.

In the short term (ie. 1-5 year timescale, real “long term” IMO is decades), I think this direction is better than monolithic apps that bake a bunch of features into them. That is, as long as we’re not talking about “escaping the 14-inch folding rectangle on my lap” kinds of approaches (AKA a topic for another time), which is why I had to clarify that I meant “short term”.

That being said, I think we can do better than the traditional side-by-side layout when it comes to having multiple apps on screen. The relational arrows from my whiteboard approach in particular are a concept borrowed from Project Xanadu. Notice how the arrows are not unidirectional, and instead represent a 2-way binding between parts of documents. I think this aspect in particular could be used between apps to mention related content.

This latter image is of a 2007 prototype called Xanadu Space, which does the Xanadu UI in 3D, thus adding more depth.


Another aspect encoded in the first design was realtime feedback from each and every action. While this was, of course, an idea largely developed in the 60s and 70s (especially so for the idea of live programming), some of Bret Victor’s work pre-Dynamicland (circa 2010-2013) is quite well known for this, though Dynamicland has naturally expanded upon it into the physical world. Regardless, my favorite talk to this date has some examples of this in the digital realm. I should also mention that the reason for the talk being my favorite is not because of the demos that show “live programming”, but rather the second half which goes into a lecture on the meaning of work. Suffice it to say, I would like to stray away from such spiritual topics in this note.

One of the biggest issues that I’m seeing with much of the ongoing work towards better tools is the idea that a single app that becomes the next editor is the ideal end-state. This is quite barbaric, and competitive rather than collaborative, in my opinion. For me at least, it’s a zero-sum game if only one or a handful of monolithic tools “win” and the rest become irrelevant.

This is what happened with the previous generation of text editors before the AI boom, and as a result no new radical UI innovations came for decades until the AI boom happened. For the established editors such radical innovation was too risky, and new players couldn’t easily enter (until AI) because the incumbents already had the market share. Also getting people to change their workflows is incredibly difficult, even if it’s to something better. The AI boom is the only reason that some (many outside of traditional tech hubs are almost entirely ignoring this) people are more open to change, but it will not last forever.

I would prefer that we are not locked into a handful of standard and stagnant editor UIs for the next 2 decades because no new competitor can easily break established workflows. Now is our window to break as much of the traditional application silo as we can. It’s true that most apps will remain monolithic, because breaking the silo requires a complete mindset shift that I’m not sure is easily possible at scale in a time frame of a few years. However, we can at least make things better than they have been in the past.

A great example of this kind of system from a UI perspective can be found in Alan Kay’s tribute to Ted Nelson. In today’s world, one would need to write or generate thousands of lines of code to make a dedicated app for what is seen in the demo. However, nearly zero new lines of code need to be added in a Smalltalk system to achieve the same functionality.

Fun fact, the entirety of Smalltalk-76 was only ~10,000 lines of code. Not because the programmers were necessarily Carmack-tier, or because Smalltalk was such a great textual language. Rather, the system was designed such that few lines of code needed to be written in the first place. There was no separation between the GUI and programming itself, just dragging things around constituted “programming”. This was in 1976 by the way.

The key idea that is shown in the Smalltalk system from Alan Kay’s tribute is that there is a way for objects to communicate with each other without direct knowledge of each other’s existence. This way, one can focus on building “separate apps”, but still have them all communicate together in one cohesive and hyper-personalized experience. UNIX is somewhat an example of this in terminal-land, but an ideal solution is more for traditional UIs (and even TUIs). Fun fact, an ancient (2005) Bret Victor paper actually describes what such a communication system could look like for GUIs. However, I’m sure we can make a more advanced one in today’s world that isn’t named MCP. MCP is only an agent protocol that has to be consciously invoked by an agent, where the agent has to know the tools available to it. We need something much more event-driven, where no 2 apps need to have explicit knowledge of each other in order to communicate.
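To make the "no explicit knowledge of each other" idea a bit more concrete, here is a minimal in-process publish/subscribe sketch. Everything in it (Event, EventBus, the page.loaded topic) is a hypothetical name invented for illustration, not the mechanism from the Bret Victor paper or from any real protocol, and a real system would have to cross process and app boundaries.

```swift
// Hypothetical event: a topic name plus a string payload.
struct Event {
  let topic: String
  let payload: String
}

// Hypothetical bus that routes events by topic. Publishers and
// subscribers only ever know about the bus, never about each other.
final class EventBus {
  private var handlers: [String: [(Event) -> Void]] = [:]

  func subscribe(to topic: String, handler: @escaping (Event) -> Void) {
    handlers[topic, default: []].append(handler)
  }

  func publish(_ event: Event) {
    for handler in handlers[event.topic] ?? [] {
      handler(event)
    }
  }
}

let bus = EventBus()
var agentLog: [String] = []

// The "agent" reacts to page loads without knowing which browser sent them.
bus.subscribe(to: "page.loaded") { event in
  agentLog.append("agent saw \(event.payload)")
}

// The "browser" announces a page load without knowing who is listening.
bus.publish(Event(topic: "page.loaded", payload: "https://example.com"))
```

The design choice worth noticing is that neither side imports or names the other, so swapping the browser or the agent for a different app would not require changing the remaining one.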

One could argue that OpenClaw is fulfilling this “communication” need between different apps, but if that were the case we wouldn’t be having this discussion. OpenClaw is more focused on “doing things” rather than “understanding things”, which is why most of us are still writing code with tools like Codex, Claude Code, Cursor, OpenCode, etc. What I actually think we need is the inverse of OpenClaw, which implies an explicit focus on “understanding” (which in turn would aid us in the “doing” part).

This would imply fewer apps that bundle an agent panel, diff viewer, browser, terminal, code reviewer, etc. into 1 monolithic app. Rather, those would all be separate (and possibly developed by separate individuals and teams), but in turn would focus more effort on communication with each other.

To give a simple model of thought, imagine if OpenCode could directly communicate with whatever browser you’re using, no matter if it’s Zen, Firefox, some Chromium browser, Safari even (lol Apple would never), etc. and vice-versa? Today, either OpenCode would have to implement a browser feature (not happening), or all of the browsers would have to implement a coding agent (more likely, but still likely not a priority feature).

However, for the case of OpenCode implementing a browser, what would it look like? You wouldn’t just be able to pull in your favorite browser because it cannot exist as a part of the OpenCode monolith.

Similarly, what does a coding agent look like in the browser? Sure, you can use the appropriate agent SDKs from Codex, Claude Code (maybe?), OpenCode, etc. but what if you wanted to use T3 Code as the agent UI for this hypothetical browser integration? You wouldn’t, because T3 Code is a separate app with its own design goals and not part of the browser monolith.

Here’s one quote from that ancient Bret Victor paper, since for some reason I thought of bringing up a 20-year-old paper of all things:

Monolithic systems are bad for software providers. In a healthy marketplace, whether of groceries or auto parts, individual providers offer components which combine with others for a complete solution. A small software provider could provide an excellent email program, or an excellent map. But only a large corporation has the resources to develop an integrated package. Once small companies can’t compete, progress stagnates.

Think about grocery stores for a second, where there are many sellers within a single store. While some big food brands are household names, many enjoyable ones aren’t. Furthermore, there’s no popular food brand in a grocery store that owns a monopoly on breakfast, lunch, or dinner. That is, you can make such meals by combining different food products from different brands. I don’t think the same can be said for software today, since each app wants to drag you into its own world entirely.

Is this to suggest that monolithic applications that have a more cohesive end-to-end experience be damned for eternity? Not necessarily, and if the universal app communication system struggles to model a cohesive interaction between 2 distinct applications, a monolith will be necessary. However, if all the monolithic app does is provide a horizontal layout with a browser/editor tab next to an agent tab with no other visual interactions between the tabs, I wouldn’t exactly call that a cohesive end-to-end experience either.

I suppose, if there’s anything to build, it would be the universal communication mechanism. This is definitely something I’m interested in, but the sheer logistics of necessary adoption suggest that it will require more than just a single guy with agents and occasional free time.

Sure enough, many (potentially millions) of us are in the same boat of having agents and occasional free time. It would be quite a waste if only a handful had the opportunity to be on the “winning team” that makes everyone else’s contributions irrelevant. We don’t need the next killer app made by a handful of people, we need the next killer effort contributed to by everyone.

— 3/26/26

Context-Free Grammar (CFG) Syntax Hell

CFGs are something I’ve been working with as of late, and I wanted to take a moment to describe my suffering with all the various syntaxes and weird parsing behaviors.

First, for anyone getting into the topic of EBNF, just know that while there are some formal syntax definitions, the ecosystem of parsers is largely just implemented in a “freeform do whatever feels right” kind of sense. To this end, I’ve seen some parsers use ordered choice instead of unordered choice for alternation, PEG-style syntax, and random regex/epsilon/character-class extensions. That is, there is effectively no single definition of what valid EBNF syntax is, and the only thing I can say is that it depends on the parser you’re using.
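The ordered versus unordered choice difference is easy to underestimate, so here is a minimal sketch of it. The two functions below are hypothetical toy matchers over literal alternatives only (not real parsers, and not part of any library mentioned here): the ordered version commits to the first alternative that matches a prefix, PEG-style, while the unordered version accepts if any alternative derives the whole input, CFG-style.

```swift
// Ordered (PEG-style) choice: commit to the first alternative that
// matches a prefix of the input, and never backtrack to later ones.
func orderedChoice(_ alternatives: [String], matchesAll input: String) -> Bool {
  for alternative in alternatives where input.hasPrefix(alternative) {
    // Committed: succeed only if this alternative consumed everything.
    return alternative.count == input.count
  }
  return false
}

// Unordered (CFG-style) choice: the input is in the language if any
// alternative derives it, regardless of listing order.
func unorderedChoice(_ alternatives: [String], matchesAll input: String) -> Bool {
  alternatives.contains(input)
}

// The same rule text, `"a" | "ab"`, under both readings.
let rule = ["a", "ab"]
let orderedResult = orderedChoice(rule, matchesAll: "ab")      // false
let unorderedResult = unorderedChoice(rule, matchesAll: "ab")  // true
```

So the same-looking rule accepts "ab" under one reading and rejects it under the other, which is exactly why the parser you're using ends up defining the grammar's meaning.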

In one sense, my idea of relativeness makes sense here. A parser should pick a format most optimized for its needs. On the other hand, we also have to ask if we need all of these syntax variants floating around? CFGs are a stable formalism that are a product of computer science research, so in this case I think it’s worth having a unified standard.

Some notes:

- LLMs generally use GBNF to constrain outputs.

- The W3C standard seems to be pretty common.

- Almost no one uses Wirth syntax except for the Wikipedia article on it.

- ISO EBNF is also a pretty popular standard.

- Almost all parsers extend pure BNF in various ways.

I’m currently working on a Swift library to make grammar definitions easier and more flexible. You’ll be able to define CFGs in a simple and standard formal syntax using strong types, proper formalisms of CFGs, result builders, and potentially macros.

// Small Sneak Peek (not finalized)

let grammar = Grammar(startingSymbol: .root) {

Rule(.root) {

Choice {

Ref("second")

Ref("first")

}

}

Rule("first") {

"a"

}

Rule("second") {

CharacterGroup("A-Z0-9")

}

}

let language = Language {

Union {

Reverse {

grammar

}

KleeneStar {

otherGrammar

}

ConcatenateLanguages {

l1

l2

}

}

}

Then you will be able to generate the syntax for whatever grammar spec you need, including upcoming CFG support in Cactus (which will be backed by XGrammar and GBNF). Note that since there are so many syntax variations, and tools that make up new syntax, I cannot realistically support everything. That being said, you can definitely expect a wide range of supported formats.

// More Sneak Peek (not finalized)

let formatted = try grammar.formatted(style: .w3cEbnf)

"""

root ::= second | first

first ::= "a"

second ::= [A-Z0-9]

"""

let formattedLanguage = try language.grammar().formatted(style: .wirthEbnf)

// GBNF is also supported at the time of writing this...

// ISO EBNF is also planned...

// So is a generic BNF format with many syntax options...

Additionally, the formatted function has to be throwing

due to the disparity of expression support between different formats.

The library tries to convert expressions for each built-in formatter

if possible, but admittedly some expressions (eg. negated character

groups in Wirth) cannot be converted. As a result, a formatter is

allowed to throw if it encounters an unsupported expression.

Parsing is also something I would like to add in the future. However, it’s not a priority for release at the moment.

Update: https://www.grammarware.net/text/2012/bnf-was-here.pdf

— 3/15/26

MiniMax

I’m a Codex user, and GPT 5.4 is my go-to model for anything serious. That being said, it’s not good for everything, and there are times where simpler models that just get the job done are more ideal than Codex’s thoroughness. I suppose I could use Opus or Sonnet for this, but that would mean subscribing to Anthropic and using Claude Code. Unfortunately, I would much rather stay in OpenCode (or possibly a newer tool like T3 Code if it matures into something I like) to ensure that everything is as streamlined as possible with no weird TOS stuff in-between.

Since Gemini doesn’t seem to be optimized for agentic coding workflows, the only remaining options are open-weight models. In particular, GLM, Kimi, Minimax, and Qwen have the edge in this space.

I haven’t bothered much with Qwen outside of using edge versions of it as a testing model for swift-cactus. In my experience, Qwen models aren’t that fast, at least on the Cactus engine. Furthermore, I don’t hear much talk about Qwen’s larger models overall, so I felt pulled in different directions.

That brings us to GLM 5 and Kimi K2.5, which have gotten a lot of attention. Having tried these models briefly, they both work very well, take their time, and can get most tasks done that Codex can accomplish. However, I have Codex already, and I don’t need a cheaper and slightly worse overall version of it. Remember that I just want something that can perform simple things fast and well.

This leaves us with Minimax M2.5. While I wouldn’t say its output is as strong as GLM 5 and Kimi K2.5, it’s definitely not that far off, and can generally solve the tasks I need it for. Furthermore, its model size is quite small, only ~230B params compared to ~750B for GLM 5 and ~1.1T for Kimi K2.5, which means it’s often faster given equivalent hardware. The reason I like it is because it isn’t trying to be a cheap Codex or Opus, but rather its own thing entirely. It has the exact opposite qualities compared to Codex, while still having good enough output quality, which is why I think it complements Codex very well.

Furthermore, from a subscriptions/usage standpoint, it’s also very generous if you go past their $10/month plan. I actually think that most people could seriously get by on their $20/month plan. Even when running it continuously through my recent entry into ralph-looping, I found that I never came even close to hitting any usage limits.

That being said, Minimax is no Codex or Opus, and you will have to significantly hand-hold the model, especially compared to Codex. Therefore, I still recommend the $200 or even $20 Codex tier above all else first, and an open-weight model like Minimax second. AFAIK, the $20 Codex tier is actually a lot better than the $20 Claude tier for many. I also think a $100 Codex tier would actually do quite well for many since it’s quite difficult to hit the limits on the $200 tier, which therefore means one is theoretically overspending on inference (not counting subsidization).

Another question one may ask is why not just get a subscription that gives you access to all the open-weight models like OpenCode or Synthetic? My first reason is that OpenCode will generally give free access to open-weight models for a limited time, which means that I will always get to at least try them without paying. Secondly, I’m not interested in the other open-weight competition for now. Perhaps NVIDIA’s Nemotron model will change my mind, but for now I haven’t had any issues with Minimax itself.

— 3/12/26

Meaning Per Second

When it comes to LLMs, we like to think of the idea of tokens per second almost as a measure of quality in many cases. I suppose this is similar to the focus on frames per second for graphics contexts. Though I think what I’m noting here applies more to the LLM case than to the graphics case, it is still applicable to both.

One interesting Alan Kay (or perhaps Xerox PARC) observation with regards to performance is the idea that the bar is the speed of the human nervous system. That is, as long as the human nervous system doesn’t notice delay, then all is well and good. For graphical contexts like those worked on at PARC, this makes a lot of sense. However, LLMs are also often paired with graphical contexts, and thus the human nervous system becomes the bar for speed yet again.

First, there are generally 2 kinds of speed metrics that we need to worry about for inference: prefill and decode. Prefill relates to forwarding tokens and building up the KV-cache, whereas decoding uses the KV-cache to generate output tokens. Generally speaking, decode is the more interesting measurement when we think about tokens per second, and especially so in the context of user interfaces. Prefilling can be hidden in the background for many applications, and such background work can be used to significantly reduce latency to the first decoded token.

Secondly, we need to establish a base rate of speed for the human nervous system. Movies use 24 FPS as a baseline, but modern interactive user interfaces use 60-120 FPS. That being said, a user interface is often still usable even if it dips slightly below that range, as long as the nervous system still perceives the interactivity as motion. If we use 60 FPS as a base rate, that leaves us ~16.67ms between each frame.

Third, we need to consider what it means for a model to emit a token, and for a frame to be drawn to the screen. Each token or frame is generally used to build up a larger communication of some kind, such as the words of an essay or the strokes of a drawing. Of course, the most important substantial transfer in any communication is meaning. Without being able to convey the meaning of something, communications become misunderstood.

What this all means is that we have ~16.67ms to emit meaning in any given scenario. Translate that into 60 TPS, and we’ll see that such a speed is already relatively common for LLMs today. Therefore, we already have the means of beating the nervous system from a raw throughput perspective.
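The arithmetic behind that number can be sketched as follows. The function names are made up for illustration; the only inputs are the 60 FPS base rate and the 60 TPS figure from the text, plus 120 TPS as an extra assumed data point.

```swift
// Time budget per unit (a frame or a token) at a given rate, in milliseconds.
func budgetMilliseconds(perSecond rate: Double) -> Double {
  1000.0 / rate
}

// Tokens a model emits per displayed frame at the given rates.
func tokensPerFrame(tokensPerSecond: Double, framesPerSecond: Double) -> Double {
  tokensPerSecond / framesPerSecond
}

let frameBudget = budgetMilliseconds(perSecond: 60)  // ≈ 16.67 ms per frame at 60 FPS
let tokenBudget = budgetMilliseconds(perSecond: 60)  // same ≈ 16.67 ms per token at 60 TPS

// At 60 TPS and 60 FPS, exactly one token lands in each frame.
let onePerFrame = tokensPerFrame(tokensPerSecond: 60, framesPerSecond: 60)

// An assumed 120 TPS model would land two tokens in each 60 FPS frame.
let twoPerFrame = tokensPerFrame(tokensPerSecond: 120, framesPerSecond: 60)
```

In other words, a 60 TPS decoder gets the same per-unit time budget as a 60 FPS renderer, which is what makes the two rates directly comparable.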

However, let’s take a moment to note the difference in output between graphics and LLMs. LLMs generally emit text, whereas graphics emit pictures. This creates another bottleneck for LLMs, because human minds absorb meaning from pictures much faster than from words. Today’s diffusion models are obviously not up to that level of speed, and it’s likely they won’t be for another few iterations of Moore’s Law (perhaps one can buy their way into the future here, similarly to Xerox PARC).

Therefore, if we want to communicate in pictures today using an LLM, our best approach from an engineering standpoint is to translate the output tokens into pictures. However, once we start thinking in terms of pictures, we stop thinking in terms of TPS, but rather a rate of meaning per token. That is, how much can a token translate to the right picture? Further, we only have ~16.67ms to do so as a base rate.

So we can see that changing the medium of communication itself brings us different design and necessary throughput constraints. Pictures as a medium have a higher throughput than words for certain kinds of meaning, but words often win when it comes to precise formal meaning. Regardless, if the point of all of this is optimization, then perhaps “meaning per second” should be the optimization slogan.

— 3/5/26

I Actually Tried A Ralph Loop

After 2 months of seriously using agents, I finally felt comfortable trying a HIL version of Ralph on a recent internal tool to do some marketing analysis for my company’s pivot. Also, I needed a test drive for my latest and quite big release of swift-cactus 2.0. (I’ll write something formal about this another time, CFG support in the main engine is still needed to get it where it needs to be…)

Additionally, a model I’ve been playing around with quite a lot recently is Minimax M2.5. A TLDR for why I like it is that it’s not trying to be a cheap Codex or Opus like GLM and Kimi, and I wanted something that wasn’t Codex for certain tasks. Regardless, this was the model I decided to use for the sake of doing so.

The tool itself was a straightforward CLI to fetch some posts from various data sources (Reddit primarily), and feed the content into LFM2-8b-a1b running locally via the cactus engine to produce suggestions and a report regarding the validity of a user-defined hypothesis. Additionally, qwen3-embed-0.6b was used as an embedding model for both vector indexing and aiding with categorization of posts. I also used this chance to play with Wax, a single-file vector database written in pure Swift, which was the primary persistence mechanism of choice. Also, if it wasn’t obvious, Swift was used as the programming language.

Overall, the task was completed with ~4-5 hours of HIL ralphing, though improvements can certainly still be made to the experience of the tool itself. Therefore, the experiment was certainly a success from the standpoint of being able to produce something that is functional.

My overall idea was to start in one session by creating a plan and detailed implementation specification document with the agent. This specification was one large markdown file because I wasn’t trying to build something incredibly complicated. Then, I ran another agent session which broke down that implementation spec into 17 distinct tasks listed in another document. Each task included a title, a description, completion criteria, and a list of tasks it depended on. Since we are doing Ralph, the agent got to pick the order of task completion. (It went mostly sequential, with a slight exception towards the later tasks where it actually backtracked for a bit.)
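For a rough picture of what such a task list amounts to, here is a minimal sketch of the task shape and of the set of tasks an agent could legitimately pick from at any point. The type, field names, and sample tasks are hypothetical; the real tasks lived in a markdown document, not in Swift.

```swift
// Hypothetical model of one task entry from the task document.
struct RalphTask {
  let id: Int
  let title: String
  let description: String
  let completionCriteria: [String]
  let dependsOn: Set<Int>  // ids of tasks that must be finished first
}

// Tasks that are not yet done and whose dependencies are all done.
// The agent is free to pick any of these next.
func readyTasks(in tasks: [RalphTask], completed: Set<Int>) -> [RalphTask] {
  tasks.filter { task in
    !completed.contains(task.id) && task.dependsOn.isSubset(of: completed)
  }
}

// Made-up sample tasks, only to exercise the function.
let sampleTasks = [
  RalphTask(id: 1, title: "Domain models", description: "Define post structs",
            completionCriteria: ["Builds", "Tests pass"], dependsOn: []),
  RalphTask(id: 2, title: "Reddit client", description: "Fetch posts",
            completionCriteria: ["Returns decoded posts"], dependsOn: [1]),
  RalphTask(id: 3, title: "Report command", description: "Wire everything up",
            completionCriteria: ["Prints a report"], dependsOn: [1, 2]),
]

let readyAtStart = readyTasks(in: sampleTasks, completed: []).map(\.id)         // [1]
let readyAfterFirst = readyTasks(in: sampleTasks, completed: [1]).map(\.id)     // [2]
let readyAfterTwo = readyTasks(in: sampleTasks, completed: [1, 2]).map(\.id)    // [3]
```

When more than one task is ready at once, the choice between them is exactly the freedom that gets handed to the agent.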

Of course, my thoughts on general software development techniques are generally mixed, and Ralph is no exception to this rule. Generally speaking, an overly dogmatic focus on patterns instead of systems is how you get complexity, so we have to keep that in mind at the end of the day. Nevertheless, here’s a somewhat comprehensive list that reflects my experience.

-

The code quality was absolutely terrible.

- Before listing individual cases here, I should mention that this is a tool that will be thrown away in a few weeks time, so the quality isn’t that important.

- @unchecked Sendable everywhere.

- Loading the model weights from scratch every time embeddings or inference was needed, instead of keeping the weights in memory.

-

Weird Java-like patterns that don’t make sense in Swift.

- Getter/Setter methods were common for some reason.

- Writing tests for obvious things, like compiler-synthesized Codable conformances.

-

Not using actors properly, and preferring NSLock when an actor

would make things simpler.

-

In one case, it tried to use a serial dispatch queue within

the actor itself to serialize work. Even worse, it would use

queue.async, and await values via withUnsafeContinuation. I’m not making this up…

-

Coupling generic logic with domain logic.

- In one case, it wrote a cosine similarity method that was private in a class. Generally speaking, I always try to extract such generic methods into a reusable place even if they are only used once.

- Etc.

-

It did a decent job separating interface from implementation via

protocols.

- That is, it was able to create mocks of things for testing which was nice.

- While it did create protocols, it often named/coupled the protocol requirements to the implementation itself which isn’t ideal.

-

It completed the work in a very short period of time.

- ~8k LOC in 4-5 hours of work isn’t bad, the same amount of code would’ve taken me at least a solid week of dedicated effort.

-

It did a decent job of implementing the isolated and mundane things.

- Reddit API Client

- Wax memory wrapper

- Cactus embedding provider for Wax

- Domain model types/simple structs

- Persistence

- Configuration reading

- Etc.

-

It did a terrible job of implementing the main tool loop.

- For some reason, it would avoid actually writing the part where the LLM was called in the main loop of the tool.

- Additionally, it avoided integrating in all of the isolated modules in the code base, preferring to use mocks in the production code.

- Suffice to say, I had to break HIL Ralph and take over manually via normal agentic coding to get it to actually build the main loop of the tool.
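As an illustration of the "coupling generic logic with domain logic" point in the list above, here is a sketch of what a cosine similarity helper might look like once extracted into a reusable place. This is not the code the agent wrote, and the function name and the choice to return nil for invalid input are assumptions made for the example.

```swift
// Generic helper with no knowledge of posts, embeddings, or any other
// domain type. Returns nil when the vectors are empty, differ in
// length, or either one has zero magnitude.
func cosineSimilarity(_ a: [Double], _ b: [Double]) -> Double? {
  guard a.count == b.count, !a.isEmpty else { return nil }
  var dot = 0.0
  var magnitudeA = 0.0
  var magnitudeB = 0.0
  for (x, y) in zip(a, b) {
    dot += x * y
    magnitudeA += x * x
    magnitudeB += y * y
  }
  guard magnitudeA > 0, magnitudeB > 0 else { return nil }
  return dot / (magnitudeA.squareRoot() * magnitudeB.squareRoot())
}

let identical = cosineSimilarity([1, 0], [1, 0])   // same direction
let orthogonal = cosineSimilarity([1, 0], [0, 1])  // perpendicular
let mismatched = cosineSimilarity([1], [1, 2])     // invalid input
```

As a free function it can be tested on its own and reused by any module that compares vectors, rather than being buried as a private method in a domain class.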

What did we learn here?

For one, I could probably be a better spec writer, and use Codex instead of Minimax. Also, I’m sure with more practice the overall output will become better regardless of the model choice. What I did certainly find was that many trivial modules can easily be ralphed with very little effort, however the fun parts of building are where breaking out of the loop seems to be a better idea.

Ralph gives more control to the agent than you. In normal agentic coding, you can generally get the agent to write decent code if you direct it well. (Though it will still often miss critical performance details, and make ~2-3 small mistakes per 1000 lines). However, the quality seems to go way down when you hand full control to the agent. This is ok if you limit its crappy output to a series of well-defined interfaces in your spec, so make sure you nail your higher level design decisions.

Overall, I once again think of this technique the way I think of graphic design relative to art. That is, it can get stuff done like a graphic designer, but it lacks the ability to produce incredible art.

— 3/5/26

Relativeness, Relativeness, Relativeness

There are only forces, nothing else.

Messages not objects.

Algorithms, not data structures.

Inference, not weights.

Yes indeed, forces are all bound by relativeness. Which in itself is a force.

This note was typed by Matthew as his mind wouldn’t let him sleep at 4:20 AM due to excessive thinking about a model of thinking that he claims is useful somehow. He desperately needs help, and his upcoming 45-50 minute piece on how the US Constitution, MCP, edge inference, dynamic interfaces, and the Weather in Antarctica are all alike should indicate that.

— 2/26/26 (4:26 AM)

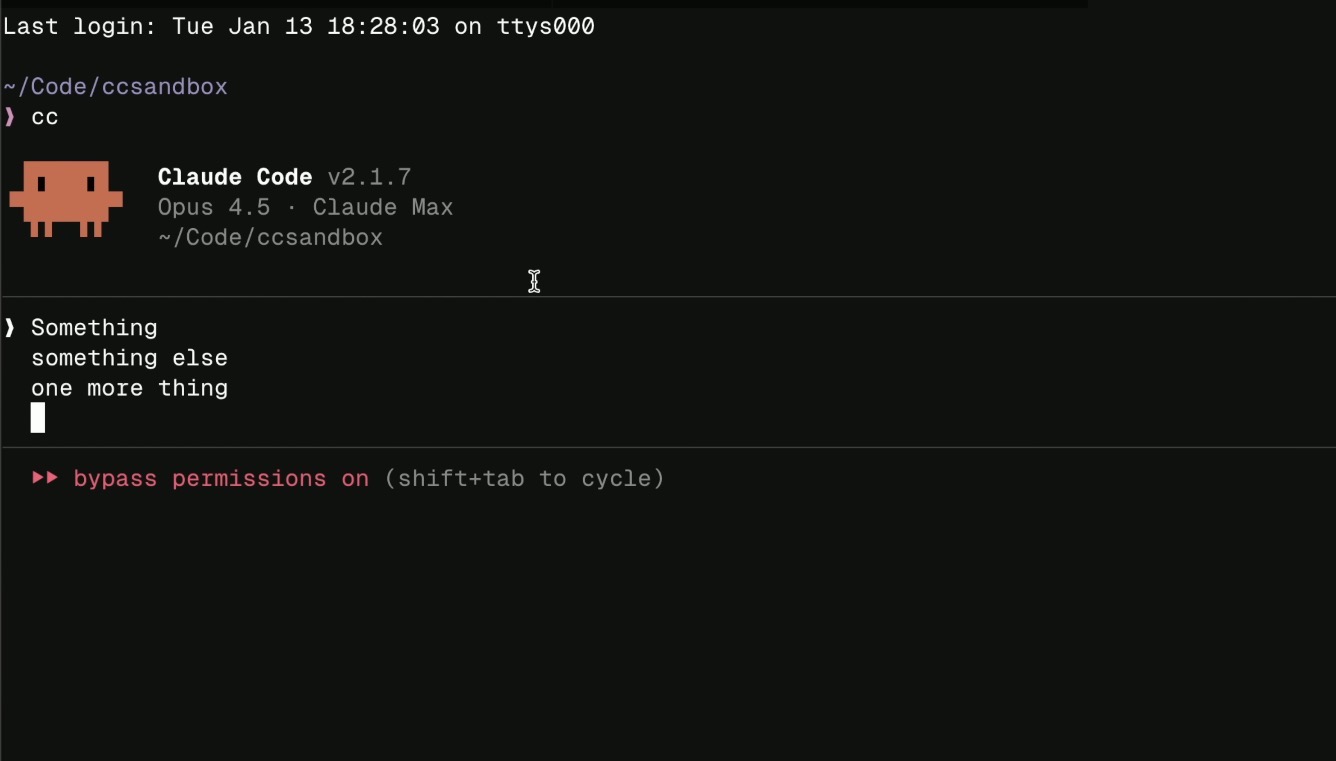

Why I don’t (and likely never will) use Claude Code.

There will be many new tools that come in the future around agents,

many will be better than Claude Code, and almost certainly we need

better tools than Claude Code. I have no interest in being shackled

when those tools are created.

— 2/18/26

Are Apps Dead?

If you’ve been paying attention to Peter Steinberger and the commentary around OpenClaw, a common trope is that most (80%) if not all apps are supposedly dead. For the record, Peter Steinberger comes from the iOS world himself, which I suppose counts as credibility here.

In my opinion, as someone who also does app development as a career, he’s kind of right if we’re referring to the state of mobile apps today. Most apps that just display simple information from an API are usually not the hardest things to create, and their UIs are genuinely not very inspiring. These kinds of apps can be merged into something like OpenClaw through a good API layer.

That being said, we have to remember what the purpose of a good UI is. That is, a world of exploration, not just another command center to perform some action. Apps with basic charts, graphics, tables, and lists are the kind of cases that OpenClaw can and will be able to handle in the future.

The kinds of apps that have a more explorative UI, but not a lot of technical complexity (eg. Hardware, Machine Learning, Domain Knowledge, Technical Integrations, etc.), can be replicated by a vibe coder that has creative tastes. Such a vibe coder can also invent a UI specific to their needs, rather than being dependent on someone else’s. This alone has cut many of my side project ideas, because there’s no point in building something commercially if someone else can just vibe code it for their own needs and purposes.

Of course, most vibe coders are not very creative people, and I see this as more of a societal issue than an inherent skill issue. Additionally, most normies also aren’t going to be vibe coding anytime soon either, and will still be perpetual consumers. This means they would still benefit from an off-the-shelf solution for many things. Though admittedly, this kind of consumption isn’t typically good for improving one’s creative abilities, and in fact it will just continue to stagnate them.

I think the apps that will still be valuable going forward, will have the following 3 traits:

-

Trust

- Every business needs trust to gain customers.

-

Unique UI

- Simple UI elements of today will not be enough here. You need something immersive, contrarian, and that doesn’t fit the chat UI mold.

-

Technical Complexity

- This makes it hard to replicate your app, because this is often the part that vibe coders with no technical knowledge cannot see.

-

Machine learning, Hardware, Domain Expertise, Security,

Ecosystems, etc.

- OpenClaw from a technical standpoint is really an exercise in security. I imagine any good engineer would be able to create its core given the same time that Peter had (and a dedicated vibe coder a very insecure version). Though disregarding security, its codebase is ~750k LOC, and it integrates with so many different things, creating a larger ecosystem that is incredibly difficult to replicate.

Generally speaking, increasing all of these 3 things in many cases requires going beyond just the app medium, and requires branching out your product further. The point is that you need to make your app hard to replicate via OpenClaw, Vibe Coding, or whatever else. Part of that comes from gaining customers, another from unique interface design, and another from deep technical knowledge.

As for myself, I find that I’m distancing myself more and more from the mobile app development label as time goes on, and agents are starting to accelerate this. In fact, the point of my work is to be increasingly general across any kind of system imaginable, apps just happen to be the current position of my career. I intend to change this as time goes on, even though app development is fun, there’s a lot more work to do outside that realm that’s also a lot of fun.

Ecosystems > Apps

— 2/15/26

Trust

This seems to be an incredibly important term in many respects: trust with individuals, customers, dependencies, etc. Reflections on Trusting Trust is a paper that every technically inclined person should read, and doubly so in today’s agentic age.

That being said, what does it actually mean to trust someone? For me at least, I like to think of it as an optimization if we strip away any emotional or spiritual semblance from it.

I trust the Swift compiler to produce correct assembly code, I trust Codex to write code according to my directions, I trust my teammates to keep innovating, I trust experts in scientific fields to give accurate information, etc. All of these things can go wrong, and I could learn to do each one of those tasks myself if I wanted, however it’s just more optimal for me not to.

That being said, trust is a very greedy optimization, and how trust is obtained is very different from the implications of the optimization. For instance, on a societal level, there’s a growing distrust in experts, but that trust is merely transferring to another class of experts (ie. Influencers). Influencers often gain trust by leveraging the idea that “the other side” is completely delusional in some form. This idea of “the other side” is actually a flaw carried over from nearly every society in history, which is why it’s one of our Human Universals.

Looking at the experts case, we see that many people can only rely on experts for basic scientific information. This itself presents a problem, because those same people have to vote representatives into office who make decisions on scientific policy. Often, those representatives lack the scientific knowledge themselves, and by necessity they’re also forced to trust an expert.

This is massively inefficient in the same way that scribes had to do the writing for everyone in ancient times. People had to trust that the scribe would translate their ideas into writing properly, which once again is a process that could go severely wrong. Once society embraced universal literacy, business, commerce, and culture could evolve as a result.

In my opinion, the same needs to happen with many scientific fields, and most definitely systems thinking. It would be much more convenient for ordinary citizens to design their own systems and experiments for their needs rather than trusting another individual or organization of experts to do it for them. Something-something scribes are only necessary in an illiterate society, and that’s why insurance is a powerful business model that chains many people.

— 2/12/26

Representations and Optimizations

If we are to program better in the future with Agentic tools, we’ll have to understand the notion of process more and more. One of my recent more fleshed out writings was a response to one of Alan Kay’s calls to arms, which argued that the notion of “Data Structures being more central to programming than algorithms” was a deadly flawed idea. To summarize my (and possibly Alan’s) response, both of those things are merely defined representations, and really if anything should be optimized, it’s the meaning of those representations.

Of course, that answer evades directly addressing the current realities of programming in most languages today, and most others I’ve asked this question to give the more typical answers. That is, something along the lines of: “Good data structures make the algorithm obvious” or “The algorithm itself must use the data structures efficiently”. These typical answers are naturally something I disagree with. Picking the right data structure doesn’t mean the algorithm will form itself, because 2 separate implementations will use the data structure differently (with variances in regard to efficiencies). Static bits in memory just don’t maintain “meaning” well enough to scale.

For the record, I’m not only referring to basic algorithms like simple sorts where there’s always a deterministic answer (in terms of correctness). Machine learning is also something that one would consider an algorithm, but its output is almost always non-deterministic. Though truth be told, if we look at raw performance in terms of latency, even the simple sort is non-deterministic because it will run faster or slower on the CPU for any given run. In such a manner, we can say that the more non-determinism, the more chance of the meaning varying.

If you wanted to kill someone via sending a package in the mail, which option would you pick?

- Send them a bomb that explodes in their face as soon as the package is opened.

- Send them the parts that make up a bomb, and hope they assemble it themselves.

Don’t ask why I picked this example of all things… It was funny to run by a few colleagues.

Obviously, no terrorist is going to pick the second option, yet the second option is more or less what we choose to do in computing today.

When we look at inefficient or incorrect implementations of even simple algorithms, we’ll also find that they tend to pick the second option rather than the more direct first. Either the parts take extra work to assemble, which degrades performance, or the parts are assembled incorrectly. So “picking the right data structure”, or rather the right meaning of information, has a profound impact on performance.

Now let’s talk about general human to human communication a bit. Poor communication causes incorrectness and inefficiencies because either the wrong work is performed, or extra work is performed that isn’t necessary. Generally, this is caused by poor preservation of “meaning” between the communications, or in other words, by picking the wrong representations.

If meaning is the center of programming, as Alan wanted to portray as a general slogan in his answer, then certainly meaning encompasses data structural representations, but also representations that are relative to something. However, if we look at general purpose programming languages today, that relativeness (I’m avoiding the term relativity thanks to Einstein) is lost to general data structures and algorithms.

What do I mean by relativeness? A simple model of this is a DSL, but really static DSLs are also quite weak. If something is to be truly relative, then it needs to be dynamic.

Take your inner social circle, and for simplicity your English speaking inner circle. Even though English is used as the DSL to speak with each person, you vary the form of English you speak with each separate person. These variances are where relativity is formed, and it is formed dynamically as you continue to speak with the person. Of course, the reason you form these variances is to optimize the manner in which you speak to the other person.

Why do we write pseudocode? In today’s agentic/LLM driven landscape, I’m going to expand the term pseudocode to include prompts that are intended to generate code.

It turns out that pseudocode is easy to write because we can keep its representation quite relative to its goal, rather than to a general purpose language. If we didn’t, the general purpose language would impose its constraints on the pseudocode, causing it to lose meaning in the grand scheme of things.

Of course, we also have to understand that the machine itself has its own relative representation for executing process, that being machine code. However, for us humans it’s quite hard to derive any sort of meaning from machine code, at least in our overall understanding of the process it represents. Obviously, this is why we have compilers that take languages more relative to us, and translate them downwards.

So really, the optimization has to be relativeness. The more relativeness, the easier to preserve the meaning and therefore efficiency of process.

— 2/6/26

Why I’m Interested in Edge Models/Inference

Apparently, just having some amount of information on the public internet that even demonstrates a slight hint towards enjoying edge models will get a few random people emailing you. Many of these emails contain the typical talking points for why edge inference is a good idea (privacy, offline, etc.). However, while those talking points are good, they are not the primary reasons I’m interested in this space.

First and foremost, my biggest concern is systems design, and the way in which people think about systems. The second of those is what I want to elaborate on in this note, because the idea of that point is to create mediums that enable better thinking.

Making people think better requires giving them a framework for thought. Most often that is a typical GUI, but it also concerns the design of frameworks in code. Rethinking Reactivity by Rich Harris is probably my all-time favorite frontend talk, and in it he really pushes the idea that frameworks are tools for your mind, not your code.

But let’s get back to traditional GUIs for a moment, because that is the interface most people use for technology. Take calendar apps for example, which we often call “productivity tools”. Why is it so productive to put events on your calendar? Really, it’s because the calendar’s UI allows you to lay out your daily events/schedule in a way that allows you to come to an understanding about them. This understanding is what makes you more productive.

When you use a calendar, you think a certain way. Likewise, when you use a coding agent, you also think a certain way. When you talk to someone, you think a certain way about your language and the person you’re talking to.

Doug Engelbart saw this trend in particular, and spent many years researching various types of interfaces that would augment one’s thinking instead of degrading it. In particular, he extended this idea to groups of people more so than a single person, but commercialization ultimately chose the path of the individual.

Likewise, I’m interested in interfaces that are malleable, almost like spoken language. For instance, while you may speak English to 2 separate people, you will not speak the same form of English to both of those people. As you further converse with someone, the language you use will change and adapt as more information is understood about the person. English is used as the base, but it is mutated at runtime (ie. in a conversation) to suit the needs of the receiver.

In other words, this mutation of English is a dynamic user interface, one that adapts based on the context. It turns out that we have technology that can: live in the user’s context, speak English fluently, run fast on consumer hardware, and pattern match far better than humans. In case you’re wondering, I’m talking about running edge models and inference.

All in all, edge models and inference are a technology that I believe can power the idea of a dynamic user interface. One might ask, why not cloud models/inference? These models are far bigger and more knowledgeable than edge models, so one has to ask why I would accept potentially degraded performance.

My answer to that comes from an engineering standpoint: the internet is too flaky and slow for the real-time component of generating UIs in response to quick user interactions. Edge models can easily hit generation speeds of >100 tps on the CPU alone given the right configuration, and are not bottlenecked by network concerns. Additionally, it’s best if they operate directly in the user’s context such that we don’t have to send sensitive data across the network.

So yes, privacy and offline support are great reasons for why I’m interested, but only from an engineering standpoint. That is, I see them as more of an implementation detail rather than the ideals themselves.

— 2/4/26

Some Things About Edge Models

I talk with iOS developers sometimes, and FoundationModels is a more popular topic in recent conversations. Notably, Apple is considered to be “behind” in the AI arms race, and I think the primary reason for this is their focus on edge models. Instead of focusing on burning billions in infrastructure costs to fuel the next generation of lobsters running on Mac Minis, Apple has decided that they’ll just run the inference on your phone instead.

One of the things I’ve realized is that most developers and technically enthusiastic users expect the output of edge models to be on par with GPT-5. Ok, maybe they don’t think that way directly, but certainly my conversations have shown hope for being able to use edge models for the same kinds of applications as cloud models.

To an extent, this opinion is valid. I do believe that most of us developers are throwing the biggest models at every problem (see Opus Spam), when smaller models, or even just basic classifier models will do. However, you’re not going to get good results attempting agentic coding with a model that only has ~3B parameters and a 4K token context window (the primary agentic coding models have at least 100B parameters and a 150k token context length).

I keep hearing hopeful statements about this year’s upcoming WWDC, in which we’ll somehow get an edge model on par with the offerings from the big AI labs. Unfortunately, that will likely not happen, at least on the current generation of hardware.

That being said, I think edge models have lots of unique power over cloud models besides the usual privacy and offline statements. One of the things I haven’t talked about publicly yet is the idea of doing dynamic user interfaces that adapt in real time as a user uses an application. Some may call this “Generative UI”, and there’s even an SDK called Tambo for this, but this SDK misses the main ideas of what I have in mind (in future writings, you’ll see that real dynamic UI is much more than merely tailoring the UI to each user based on a prompt).

I wouldn’t try to use a cloud model for dynamic UI, either because of network latency/reliability or because inference speeds are too slow. It’s not uncommon for edge models to reach speeds of over 100 tps even just running on the CPU directly. The network issue is the bigger problem here, because even inference speeds of >1000 tps mean nothing if the user’s network is down.
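To make the trade-off concrete, here is a rough sketch of the arithmetic. The function name, the token count, and the 200 ms round trip are all made up for illustration, and the model ignores time-to-first-token and queueing entirely.

```swift
// Estimated seconds until a generated response is complete.
// `networkRoundTrip` is nil when the network is unreachable; pass 0 for
// on-device inference, which needs no network at all.
// (Hypothetical helper, not part of any SDK.)
func responseTime(tokens: Int, tokensPerSecond: Double, networkRoundTrip: Double?) -> Double? {
    guard let roundTrip = networkRoundTrip, tokensPerSecond > 0 else { return nil }
    return roundTrip + Double(tokens) / tokensPerSecond
}

// Assumed inputs: a 50-token UI update.
let edge = responseTime(tokens: 50, tokensPerSecond: 100, networkRoundTrip: 0)
let cloud = responseTime(tokens: 50, tokensPerSecond: 1000, networkRoundTrip: 0.2)
let cloudOffline = responseTime(tokens: 50, tokensPerSecond: 1000, networkRoundTrip: nil)
```

With these assumed inputs the cloud call still finishes sooner while the network is healthy. The edge call is the only one that produces an answer when it isn't, which is the failure mode that matters for an interface reacting to every interaction.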

Another thing to note is that edge models can defeat the bigger cloud models in some tasks, that is if you fine tune them. Any application that seriously uses edge models should be using fine tuned models, and I think this is a hole in the space that should be addressed by available tooling. Most developers are completely unfamiliar with the concept, and would rather be building features instead of LoRA adapters.

Lastly, one other big idea is remote control. Since edge models run locally on the client, the system prompts are also going to have to be present on the client. However, a hard-coded client-side system prompt that’s dangerous will be incredibly hard to update, especially if you’re deploying to the App Store. Once a prompt is hard-coded on the client, it remains there until users install a new build, so for that reason it’s ideal to have your app check for system prompt updates at runtime such that you can deploy new prompts without going through app review.

Now of course, you need to ensure that you take appropriate measures to prevent MITM attacks from injecting bad prompts on the client. Prompt injection still is a security problem at the end of the day.
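A minimal sketch of that idea, assuming a versioned prompt and an injected authenticity check. Every name here (`SystemPrompt`, `PromptStore`, `isAuthentic`) is hypothetical, and the check in the usage lines is a stand-in. In a real app it would be something like verifying a signature against a public key pinned in the binary.

```swift
// A versioned system prompt. All names in this sketch are hypothetical.
struct SystemPrompt: Equatable {
    let version: Int
    let text: String
}

struct PromptStore {
    // Starts as the prompt shipped inside the app binary (the fallback).
    private(set) var current: SystemPrompt

    // Decides whether a downloaded prompt is authentic. Injected so the
    // sketch stays independent of any particular crypto library.
    let isAuthentic: (SystemPrompt) -> Bool

    init(bundled: SystemPrompt, isAuthentic: @escaping (SystemPrompt) -> Bool) {
        self.current = bundled
        self.isAuthentic = isAuthentic
    }

    // Applies a remote prompt only if it is newer AND passes verification.
    // Returns true when the update was accepted.
    @discardableResult
    mutating func apply(remote: SystemPrompt) -> Bool {
        guard remote.version > current.version, isAuthentic(remote) else {
            return false
        }
        current = remote
        return true
    }
}

var store = PromptStore(bundled: SystemPrompt(version: 1, text: "Be concise.")) { remote in
    remote.text.hasPrefix("signed:") // stand-in, NOT a real signature check
}
let accepted = store.apply(remote: SystemPrompt(version: 2, text: "signed:Be brief."))
let rejected = store.apply(remote: SystemPrompt(version: 3, text: "tampered"))
```

The design choice worth noting is that a failed check leaves the last known-good prompt in place, so a bad download degrades to the old behavior rather than to no prompt at all.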

Additionally, observability is also an important aspect. Particularly, you’ll want to address the basics of detecting things like output speeds, confidence thresholds, memory usage, etc. on a per-prompt basis. However, good observability should also embed safety, and therefore act as a NORAD in order to detect warning signs of things going catastrophically wrong. (eg. A system prompt that’s doing more harm than good to a user.)
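As a rough sketch of the "basics" part only, here is what per-prompt throughput tracking could look like. The types and the 50 tps floor are assumptions for illustration. Confidence thresholds, memory usage, and the safety signals would need their own fields and real data sources.

```swift
// One inference run for a given prompt. All names are hypothetical.
struct InferenceSample {
    let promptID: String
    let outputTokens: Int
    let seconds: Double
}

struct PromptMetrics {
    private var samples: [String: [InferenceSample]] = [:]

    init() {}

    mutating func record(_ sample: InferenceSample) {
        samples[sample.promptID, default: []].append(sample)
    }

    // Average tokens per second across all runs of a prompt, or nil if
    // nothing has been recorded (or no time has elapsed).
    func tokensPerSecond(for promptID: String) -> Double? {
        guard let runs = samples[promptID] else { return nil }
        let tokens = runs.reduce(0) { $0 + $1.outputTokens }
        let seconds = runs.reduce(0.0) { $0 + $1.seconds }
        return seconds > 0 ? Double(tokens) / seconds : nil
    }

    // Prompt IDs whose average throughput fell below the given floor.
    func slowPrompts(below floor: Double) -> [String] {
        samples.keys.filter { id in
            (tokensPerSecond(for: id) ?? .infinity) < floor
        }.sorted()
    }
}

var metrics = PromptMetrics()
metrics.record(InferenceSample(promptID: "summarize", outputTokens: 120, seconds: 1.0))
metrics.record(InferenceSample(promptID: "summarize", outputTokens: 80, seconds: 1.0))
metrics.record(InferenceSample(promptID: "rewrite", outputTokens: 30, seconds: 1.0))
let flagged = metrics.slowPrompts(below: 50)
```

Keying everything by prompt ID is what lets a remotely deployed prompt be compared against the one it replaced.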

I’ll have more to say on this topic in future writings. At the very least, edge models are likely to be used as the implementation driver of a lot of my upcoming design work, which is why I’m interested in them.

— 2/3/26

Is One Shotting a Good Idea?

If you’ve been using agents for a decent amount of time now, you’re likely familiar with a workflow that involves creating and iterating on some sort of detailed plan with the agent, and then delegating the implementation to the agent. Often, if the plan is well written enough, the agent can one-shot the implementation, meaning that no follow-up prompts are necessary.

For lots of things, this is great, however I’m concerned about how much this impacts overall systems understanding. If you have an agent one-shot a major feature in a serious project, even if it works, is that really a good idea for long term maintenance?

Of course, for throw-away prototypes or one-off vibe-coded things, this isn’t really much of an issue. My concerns are more related to dealing with larger and more complex systems that can’t simply be vibe-coded without taking on a huge liability risk.